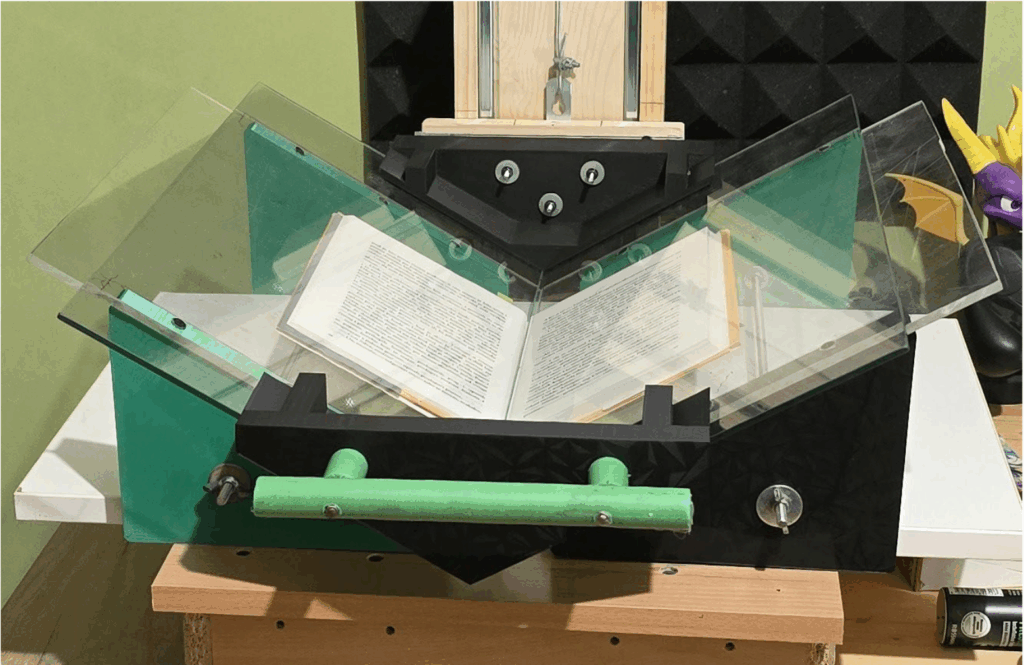

Mr. Igor Ćulafić successfully defended his master’s thesis titled “Cross-lingual Transfer Learning in Large Language Models: Scaling Laws and Parameter-Efficient Fine-Tuning for Multilingual Applications.” His research provides a comprehensive study of cross-lingual transfer for the Montenegrin language, combining a custom V-shaped semi-automated book scanner, a YOLOv11 + Tesseract OCR pipeline, and the creation of 46,661 parallel paragraph pairs. Using LoRA fine-tuning on Qwen2.5-7B and Qwen3-30B, run on the Leonardo EuroHPC supercomputer, the work demonstrates parameter-efficient adaptation (only 1.05% trainable parameters) and offers insights into model behavior in cultural understanding, script mixing, and analytical reasoning. This research was supported by the NCC Montenegro team and made use of the HPC cluster and EuroHPC JU computational resources.

ABSTRACT – This thesis presents a comprehensive study of cross-lingual transfer learning in Large Language Models, with a focus on parameter-efficient fine-tuning for the Montenegrin language. The research integrates the development of a custom semi-automated book scanner with a V-shaped design and a computer vision pipeline using YOLOv11 models and Tesseract OCR to digitize material from public domain books, 5,000 in Montenegrin and 40,000 in English, resulting in 46,661 parallel paragraph pairs. LoRA fine-tuning of the Qwen2.5-7B and Qwen3-30B models was carried out on the Leonardo HPC supercomputer, achieving memory efficiency with only 1.05% trainable parameters. A comparative analysis based on a structured benchmark of ten progressively complex questions reveals limited but positive effects of fine-tuning: larger models perform better in cultural understanding and analytical tasks, while systematic analysis identifies specific problems, such as script mixing and cultural inaccuracies, that require specialized approaches.
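
As a rough illustration of why LoRA leaves only a small share of parameters trainable, the sketch below counts the parameters that rank-r adapters add to a set of frozen weight matrices. The function name, the layer shape, and the rank are hypothetical values chosen for the example. They are not the configuration used in the thesis, and the result is not meant to reproduce the reported 1.05%.

```python
def lora_trainable_fraction(layer_shapes, rank):
    """Share of trainable parameters when LoRA adapters are added to frozen layers.

    layer_shapes: list of (d_out, d_in) shapes of the adapted weight matrices
                  (hypothetical values, not taken from the thesis).
    rank:         LoRA rank r (also an assumption).

    Each adapted matrix W (d_out x d_in) stays frozen and gains two small
    trainable matrices, B (d_out x r) and A (r x d_in), i.e. r * (d_in + d_out)
    extra parameters.
    """
    frozen = sum(d_out * d_in for d_out, d_in in layer_shapes)
    trainable = sum(rank * (d_in + d_out) for d_out, d_in in layer_shapes)
    # Fraction is measured against all parameters, frozen plus adapters.
    return trainable / (frozen + trainable)


# Hypothetical example: one 4096 x 4096 projection adapted with rank 16.
fraction = lora_trainable_fraction([(4096, 4096)], rank=16)
print(f"trainable share: {fraction:.4%}")
```

In a real model the share also depends on which layers receive adapters and on the parameters that are left without adapters (embeddings, norms, unadapted projections), so the actual figure comes from the full model configuration.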