Ms. Ivana Lalatović successfully defended her master’s thesis titled “Application of Explainable Artificial Intelligence in Medicine” at the Faculty of Information Systems and Technologies, University of Donja Gorica.

The defence took place in October 2025. The thesis explored how modern XAI techniques, such as SHAP and LIME, can improve transparency and trust in AI models used for analysing the performance and reliability of medical respirators. The development, training, and testing of the machine learning and XAI workflows were supported by the high-performance computing (HPC) resources provided through the EuroCC initiative in Montenegro, which enabled the scalable data processing, faster experimentation, and reproducible analysis that medical AI applications require. Her work demonstrates how HPC-enabled explainability can strengthen the safety, reliability, and ethical use of AI in healthcare environments, and it contributes to the growing ecosystem of advanced AI research supported by NCC Montenegro.

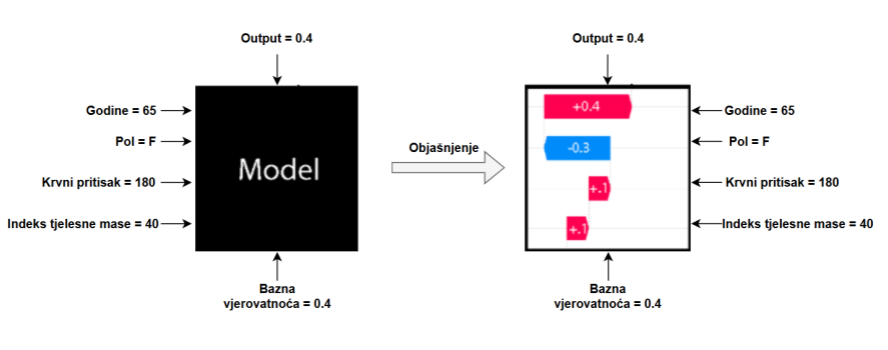

ABSTRACT – The need for explainable intelligent systems is growing along with the number of artificial intelligence products used in everyday life. Explainable artificial intelligence (XAI) has experienced significant growth in the last few years, driven by the wide application of machine learning and deep learning techniques. These techniques have produced highly accurate models that nevertheless lack explainability and interpretability. This study explores the application of XAI methods in medicine, with a particular focus on interpreting model decisions. SHAP and LIME methods were applied to interpret the model’s predictions, enabling the identification of the features that have the greatest influence on the model’s decisions. The results of this research confirm the importance of explainable artificial intelligence in critical domains such as medicine, where trust in AI systems must be based on the understanding and verifiability of their decisions.
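
For readers unfamiliar with the idea behind SHAP, the following is a minimal sketch of exact Shapley-value attribution, the concept SHAP builds on. It is not the thesis's code or data: the toy model, the feature names (`pressure`, `flow`, `leak`), and the all-zero baseline are hypothetical and chosen only for illustration. The sketch uses the Python standard library alone; the SHAP library itself estimates these values far more efficiently for real models.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition are replaced by their baseline value.
    The cost grows exponentially with the number of features, so this
    is only practical for very small models.
    """
    n = len(instance)

    def value(coalition):
        # Evaluate the model with only the coalition's features "switched on".
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i to this coalition.
                phi += weight * (value(s | {i}) - value(s))
        contributions.append(phi)
    return contributions


def toy_model(x):
    # Hypothetical scoring function, NOT the model from the thesis.
    pressure, flow, leak = x
    return 2.0 * pressure + 0.5 * flow + 1.0 * pressure * leak


instance = [1.0, 4.0, 2.0]   # hypothetical measurement to explain
baseline = [0.0, 0.0, 0.0]   # hypothetical reference input
phi = shapley_values(toy_model, instance, baseline)

for name, contribution in zip(["pressure", "flow", "leak"], phi):
    print(f"{name}: {contribution:.3f}")
```

The contributions sum to the difference between the model's output for the instance and for the baseline, which is the property that makes such attributions useful for ranking feature influence. The interaction term between `pressure` and `leak` is split equally between those two features.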