Ms. Ivana Lalatović successfully defended her master's thesis, titled “Application of Explainable Artificial Intelligence in Medicine”, at the Faculty of Information Systems and Technologies, University of Donja Gorica.

The defense took place in October 2025. The thesis examined how modern XAI techniques, such as SHAP and LIME, can improve transparency and trust in AI models used to analyze the performance and reliability of medical ventilators. The development, training, and testing of the machine learning and XAI workflows were supported by high-performance computing (HPC) resources provided through the EuroCC initiative in Montenegro, enabling scalable data processing, faster experimentation, and the reproducible analysis that medical AI applications require. Her work shows how HPC-enabled explainability can strengthen the safety, reliability, and ethical use of AI in healthcare settings, contributing to the growing ecosystem of advanced AI research supported by NCC Montenegro.

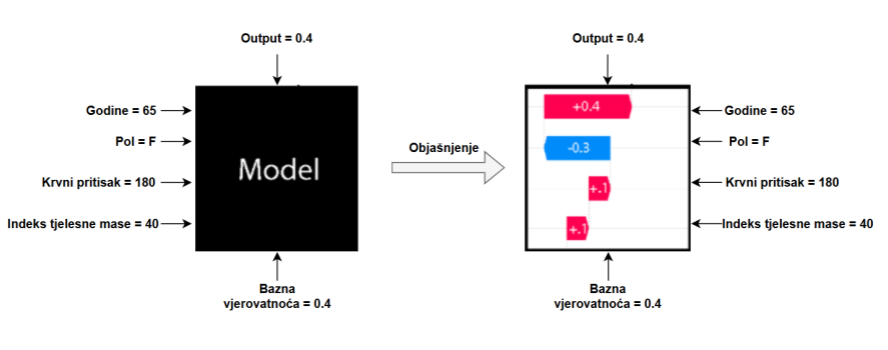

ABSTRACT – The need for explainable intelligent systems is growing along with the number of artificial intelligence products used in everyday life. Explainable artificial intelligence (XAI) has grown significantly in the last few years. The reason is the wide application of machine learning and deep learning techniques, which have led to the development of highly accurate models. However, these models lack explainability and interpretability. This study explores the application of XAI methods in medicine, with a particular focus on interpreting model decisions. The SHAP and LIME methods were applied to interpret the model’s predictions, enabling the identification of the key features that have the greatest influence on the model’s decisions. The results of this research confirm the importance of explainable artificial intelligence in critical domains such as medicine, where trust in AI systems must be based on the understanding and verifiability of their decisions.